Sorting shows up in everything from database joins to the lists users scroll through on a phone; small choices in sorting can change responsiveness and resource use across an application. Knowing how algorithms behave helps you match performance needs to real workloads.

There are 20 Examples of Sorting Algorithms, ranging from American Flag sort to Timsort. For each entry you’ll find Time (avg/worst),Space (aux),Stability so you can compare runtime behavior, memory needs, and whether relative order is preserved — helpful when sorting multi-key records; you’ll find the full list below.

How do I choose the right sorting algorithm for my data?

Consider input size, whether the data is partially sorted, memory limits, and if you need stability. For general-purpose use on varied inputs Timsort is a safe default; for integer or fixed-key data, radix-style approaches (like American Flag) can be faster and more memory-efficient. If worst-case guarantees matter, prefer mergesort or introsort; if memory is tight, favor in-place algorithms.

When does stability matter and which sorts here are stable?

Stability matters when equal-key items must keep their original order—common in multi-key sorts or preserving timestamps. Stable options include merge-based and adaptive sorts (e.g., mergesort, Timsort, insertion), while quicksort and heapsort are typically unstable; check the Stability column below for each algorithm.

Examples of Sorting Algorithms

| Name | Time (avg/worst) | Space (aux) | Stability |

|---|---|---|---|

| Bubble sort | O(n^2) / O(n^2) | O(1) | Stable |

| Cocktail Shaker sort | O(n^2) / O(n^2) | O(1) | Stable |

| Comb sort | O(n^2) / O(n^2) | O(1) | Unstable |

| Insertion sort | O(n^2) / O(n^2) | O(1) | Stable |

| Selection sort | O(n^2) / O(n^2) | O(1) | Unstable |

| Merge sort | O(n log n) / O(n log n) | O(n) | Stable |

| Timsort | O(n log n) / O(n log n) | O(n) | Stable |

| Quick sort | O(n log n) / O(n^2) | O(log n) | Unstable |

| Introsort | O(n log n) / O(n log n) | O(log n) | Unstable |

| Heap sort | O(n log n) / O(n log n) | O(1) | Unstable |

| Shell sort | Depends on gap; typical O(n log^2 n) / O(n^2) | O(1) | Unstable |

| Radix sort (LSD) | O(n·k) / O(n·k) | O(n + k) | Stable (LSD variant) |

| Counting sort | O(n + k) / O(n + k) | O(n + k) | Stable |

| Bucket sort | O(n + k) / O(n^2) | O(n + k) | Stable (typical) |

| Smoothsort | O(n log n) / O(n log n) | O(1) | Unstable |

| Cycle sort | O(n^2) / O(n^2) | O(1) | Unstable |

| Pancake sort | O(n^2) / O(n^2) | O(1) | Unstable |

| Bitonic sort | O(n log^2 n) / O(n log^2 n) | O(1) | Unstable |

| American Flag sort | O(n·k) / O(n·k) | O(k) | Unstable |

| Gnome sort | O(n^2) / O(n^2) | O(1) | Stable |

Images and Descriptions

Bubble sort

Simple comparison sort that repeatedly swaps adjacent out-of-order items until the list is sorted. Easy to understand and implement but very slow on large inputs; useful for teaching, tiny arrays, and detecting nearly-sorted data because of its adaptive behavior.

Cocktail Shaker sort

Bidirectional variant of bubble sort that passes through the list forwards and backwards, moving large and small items toward ends each sweep. Slightly faster than bubble in practice on some data, mainly of educational or small-array use.

Comb sort

Improves bubble by using a shrinking gap to compare distant elements, eliminating turtles (small values near end) faster. Simple, practical for moderate sizes, but still O(n^2) worst-case; a handy teaching example and small improvement over naive bubble.

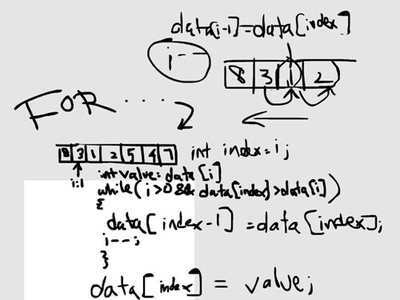

Insertion sort

Builds a sorted prefix by inserting each new item into its correct position, performing well on small or nearly sorted lists. Very low overhead, stable, and often used inside hybrid sorts for small subarrays or when adaptive performance matters.

Selection sort

Selects the minimum (or maximum) repeatedly and places it into position, requiring minimal swaps. Simple and easy to reason about but quadratic time and not adaptive; useful when write operations are expensive because it minimizes data movement.

Merge sort

Divide-and-conquer algorithm that recursively splits data and merges sorted halves; predictable O(n log n) time and stable if merge is implemented stably. Excellent for large datasets, external sorting, and parallelization though requires extra auxiliary space.

Timsort

Hybrid stable sort used in Python and Java that detects runs and merges them using insertion sort and merge sort techniques. Very good practical performance on real-world data, adaptive to existing order and efficient in memory for many workloads.

Quick sort

Partition-based divide-and-conquer sort that chooses pivots to split arrays, offering excellent average-case O(n log n) performance and low auxiliary space. Fast in practice but worst-case O(n^2) unless guarded by randomization or hybrid fallbacks.

Introsort

Hybrid approach that starts with quicksort and switches to heapsort when recursion depth grows, ensuring O(n log n) worst-case time. Combines quicksort’s practical speed with heapsort’s guarantees; commonly used as the default implementation in standard libraries.

Heap sort

Uses a binary heap to repeatedly extract the maximum and build a sorted array in-place, guaranteeing O(n log n) time without extra significant memory. Not stable, but useful when worst-case time bounds and in-place operation are required.

Shell sort

An in-place generalization of insertion sort using decreasing gap sequences to move elements quickly toward their final positions. Complexity depends heavily on the gap sequence; practical and compact, it’s faster than plain insertion on medium-sized arrays.

Radix sort (LSD)

Non-comparative integer or string sort that processes keys by digit or character positions (least-significant digit first). Runs in linear time relative to input size and key length; very fast for fixed-size keys but needs extra space and stable digit-level routines.

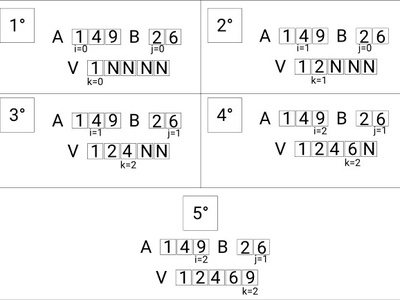

Counting sort

Distribution sort that counts occurrences of keys to compute positions, achieving O(n + k) time where k is key range. Extremely fast and stable for small integer ranges, often used as a subroutine in radix sort or when key domain is limited.

Bucket sort

Partitions data into buckets and sorts each bucket individually, ideal when input is uniformly distributed. Average-case linear time with appropriate bucketing; practical for floating-point or uniformly random data but worst-case degrades to quadratic.

Smoothsort

Dijkstra’s in-place comparison sort that’s adaptive to existing order with O(n log n) worst-case and near-linear behavior on nearly-sorted inputs. More complex to implement than heapsort; notable for minimizing work on mostly-sorted arrays without extra memory.

Cycle sort

Minimizes the number of writes by rotating elements into their final positions, achieving minimal memory writes and O(n^2) time. Useful in contexts where write operations are costly (e.g., EEPROM) but impractical for large datasets due to quadratic time.

Pancake sort

Sorts by repeatedly flipping prefixes (like sorting pancakes by spatula), using only prefix-reversal operations. Mostly a theoretical and puzzle-focused algorithm with O(n^2) worst-case time; interesting for algorithmic proofs and constrained-operation problems.

Bitonic sort

Comparator-network-based parallel-friendly sort that constructs bitonic sequences and merges them; used in hardware and parallel algorithms. Time O(n log^2 n) with regular data movement patterns, suitable for GPUs and sorting networks though not optimal for sequential general-purpose sorting.

American Flag sort

In-place MSD radix-like distribution sort efficient for variable-length keys like strings, grouping by digit buckets and recursing. Practically fast and cache-friendly for many inputs, but implementation complexity and unstable behavior can be downsides.

Gnome sort

Simple insertion-like algorithm that walks back swapping out-of-order neighbors until elements are in place. Easy to implement and stable but quadratic time in general; useful mainly as a teaching example or tiny-scope utility for almost-sorted data.