In 2012 a convolutional neural network called AlexNet cut the ImageNet error rate dramatically and helped launch the modern era of deep learning.

That single result showed how an architecture choice plus lots of labeled data could change what machines do.

People should care because these systems boost productivity, power features they already use like camera scene detection and translation, and shape high-stakes decisions in medicine and business.

Deep neural networks rest on simple math but give surprising capabilities and real trade-offs.

This piece lays out eight clear facts about deep learning that explain how these models work, where they shine, and what challenges remain, grouped into three thematic sections: Foundations, Applications & Impact, and Challenges & Future.

Foundations: What Deep Learning Is and How It Works

Architecture, training, and data are the backbone of modern AI models. The next points explain core mechanics in plain language and why they matter in practice.

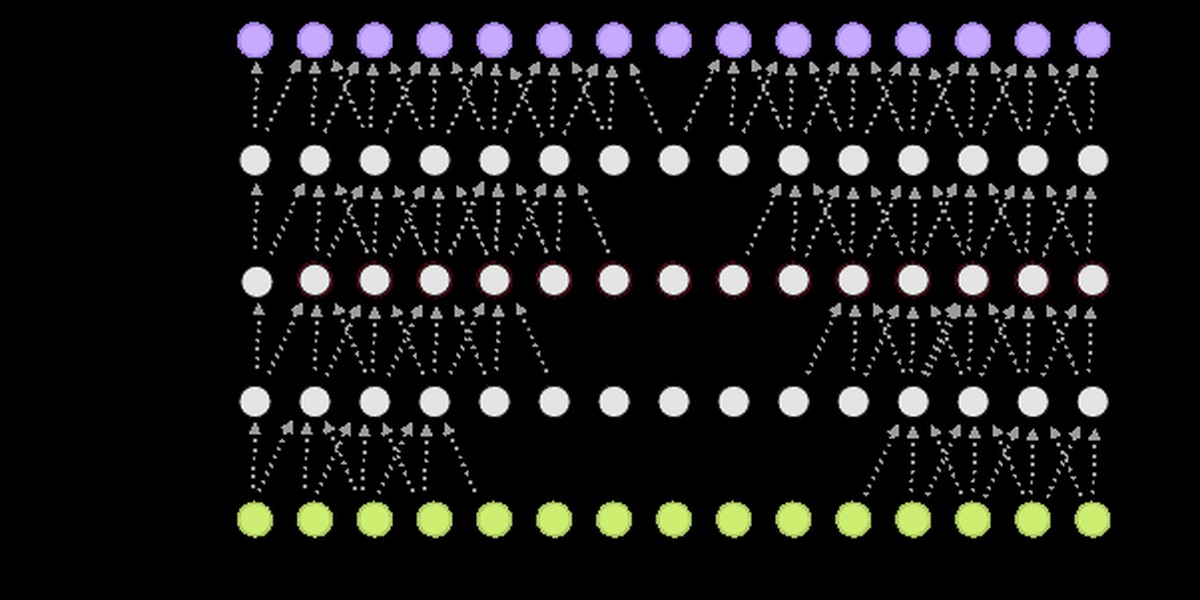

1. Neural networks are layered systems that learn representations

Neural networks stack layers of simple units called neurons that transform raw inputs into higher-level features.

Each connection has a numeric weight; training adjusts those weights so the composed layers approximate a desired function. The perceptron was an early single-layer idea, but depth—many layers—lets nets learn increasingly abstract patterns.

AlexNet (Alex Krizhevsky, 2012) illustrated the practical power of deeper convolutional architectures. It had roughly 60 million parameters and trained on the ImageNet dataset of about 1.2 million labeled images, enabling big gains in object recognition that now appear in smartphone cameras and social-photo tagging.

2. Training uses gradient descent and backpropagation to tune millions of weights

Learning means adjusting numeric weights using backpropagation, the algorithm popularized in 1986 by Rumelhart, Hinton, and Williams.

Practically, optimization runs gradient descent (or its variants) across many passes over data. Common hyperparameters include learning rate, batch size, and number of epochs.

Many image models train for on the order of 10–100 epochs; training time ranges from hours to weeks depending on model size and hardware. GPUs (commonly NVIDIA) and TPUs cut runtimes dramatically, but faster training can require careful tuning to remain stable.

3. Data quantity and quality often matter more than clever architectures

More and better-labeled data typically improve performance more than incremental architectural tweaks. ImageNet’s ~1.2 million labeled images was a turning point for vision, and modern language models train on corpora measured in terabytes.

Curating labels is costly, and poor data breeds bias and fragility in downstream tasks. That reality drives practices like dataset auditing and careful labeling pipelines.

Pretraining and transfer learning help: a model trained on massive, general data can be fine-tuned on smaller, task-specific sets, reducing the need for huge labeled collections in every new application.

Applications and Impact: Where Deep Learning Shows Up

Foundational capabilities—pattern recognition from images and text—translate into products people use every day. The following examples show vision, language, and scientific use cases with concrete milestones.

4. Vision systems power cameras, manufacturing, and autonomous vehicles

Convolutional networks and their variants drive tasks such as image classification, object detection, and segmentation. Benchmarks like ImageNet pushed rapid gains in accuracy that translated to real deployments.

Smartphone camera scene recognition and low-light enhancement use these models to pick exposure or apply filters. Manufacturing lines use vision-based defect detection to catch faulty parts at high throughput.

Perception stacks in autonomous systems (for example, Waymo and Tesla’s Autopilot perception modules) rely on neural nets to detect lanes, vehicles, and pedestrians. On specific benchmarks, models have surpassed human accuracy, though generalization to every road condition remains challenging.

5. Language models changed how machines understand and generate text

Transformer architectures and large-scale pretraining reshaped NLP. BERT (Google, 2018) improved contextual embeddings for understanding, while GPT-3 (OpenAI, 2020) showed fluent text generation with ~175 billion parameters.

These models improved search relevance, machine translation quality, summarization, and conversational agents. Many products now use embeddings for semantic search and transformers for text completion.

Limitations persist: models can hallucinate facts, require fine-tuning for specific tasks, and need guardrails to avoid generating unsafe or biased content. For credibility, consult original papers like BERT (2018) and the GPT-3 technical report.

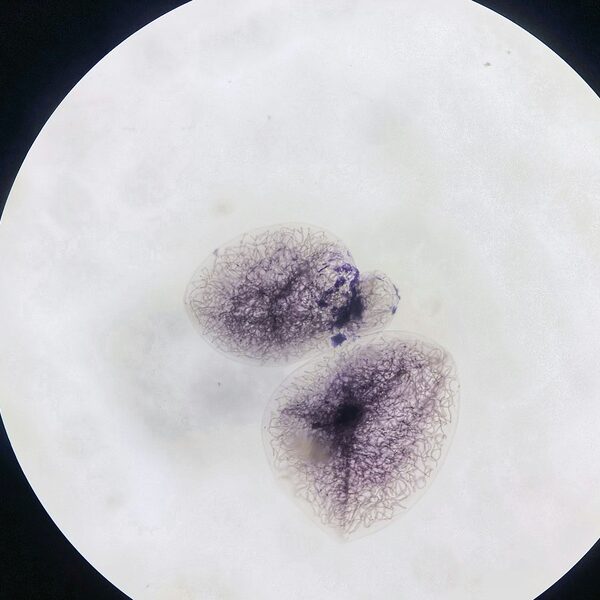

6. Deep learning speeds up discovery in healthcare and the sciences

Deep models complement human expertise in diagnostics and research by spotting patterns at scale and accelerating routine analyses.

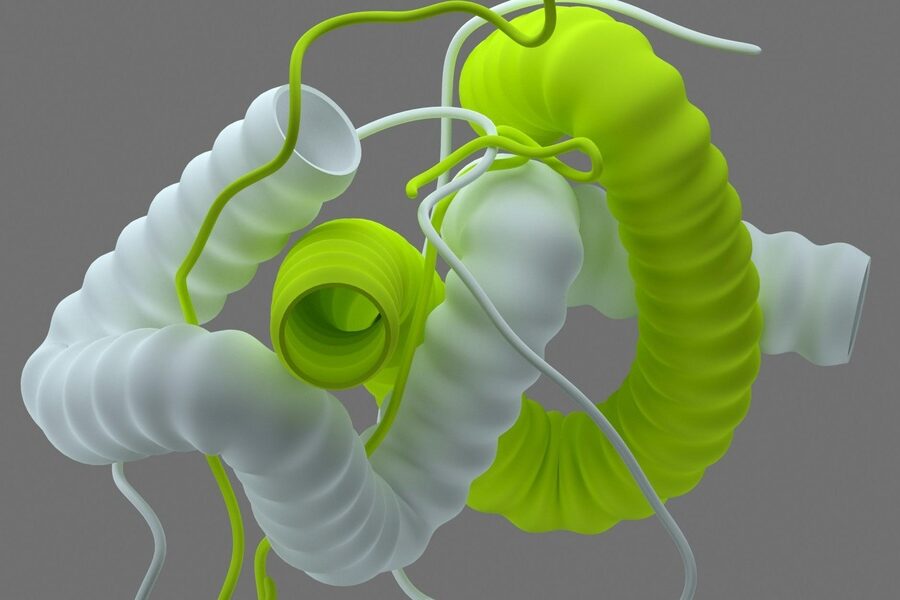

Notable examples include AlphaFold (DeepMind), which in 2020 achieved high accuracy in protein structure prediction, and IDx-DR, an AI system authorized by the FDA in 2018 for diabetic retinopathy screening. These achievements cut lab cycles and informed early-stage research.

Practical use still requires clinical validation, regulatory oversight, and integration into workflows. When adopted responsibly, these tools reduce time-to-insight and increase throughput in drug discovery and diagnostics.

Challenges and Future Directions: What to Watch

Shifting from capabilities to trade-offs, developers and policymakers face social, technical, and environmental constraints. The next facts point to areas where care and research are essential.

7. Interpretability and bias remain major concerns with real-world consequences

Deep models can be opaque and tend to reflect biases present in training data. The Gender Shades study (Joy Buolamwini, MIT Media Lab, 2018) documented severe disparities in commercial gender-classification systems: error rates reached roughly 34% for darker-skinned women versus near 0% for lighter-skinned men on some systems.

Such disparities have downstream impacts in hiring, surveillance, and lending if systems are used without mitigation. That reality has driven dataset auditing, fairness-aware training, and development of interpretability tools.

Mitigations work, but they require deliberate practice: diverse data collection, bias testing across subgroups, and ongoing monitoring in deployment settings.

8. Large models demand big compute — and that has cost and environmental implications

Scaling models increases compute, expense, and energy consumption. Strubell et al. (2019) estimated that training a large NLP model can emit on the order of hundreds of tons of CO2, citing a headline figure of about 284 tCO2e for a large training run.

Models like GPT-3 (~175 billion parameters) require multi-million-dollar training budgets and substantial GPU/TPU resources. These costs affect who can build state-of-the-art models and raise environmental questions.

Researchers are responding with model compression, distillation, pruning, sparse architectures, and more efficient chips to reduce both monetary and carbon cost while retaining performance.

Summary

- Layered neural nets trained by backpropagation, combined with large datasets, are the technical core behind modern AI.

- Breakthroughs and scale—AlexNet/ImageNet, BERT and GPT-3, and AlphaFold—show applications across vision, language, and scientific discovery.

- Data quality often drives real-world performance more than marginal architecture changes; transfer learning can reduce labeling needs.

- Documented harms include biased outcomes (e.g., Gender Shades, 2018) and sizable training carbon footprints (Strubell et al., 2019), which motivate auditing and efficiency work.

- Facts about deep learning point to a dual responsibility: pursue useful applications while investing in fairness, interpretability, and energy-efficient methods.