In 1900 Max Planck introduced the idea of quantized energy to explain blackbody radiation. That small step set off a century of discoveries. By 1905 Einstein used quanta to explain the photoelectric effect, and by the 1927 Solvay Conference physicists were arguing over the meaning of the wavefunction. Planck’s constant, 6.626×10⁻³⁴ J·s, sits at the heart of that shift.

Why should you care? These advances power devices you use daily — from LEDs to atomic clocks and MRIs — and they shape emerging fields like quantum computing. The contrasts are not just academic.

Quantum physics and classical physics offer contrasting pictures of reality: determinism and continuity on one side, probability and discreteness on the other. The seven contrasts below explain how the microscopic world changes our predictions, measurements, and technology, and why both frameworks still matter.

Fundamental Conceptual Differences

Early experiments around 1900–1910 forced a rethinking of what matter and light actually are. This category covers what each theory assumes reality is like, whether quantities vary smoothly or in jumps, and the surprising wave–particle behavior that only shows up in quantum descriptions. The supporting evidence runs from blackbody and photoelectric data to the first electron diffraction patterns in 1927.

1. Determinism vs. Probability

Classical physics predicts definite future states from exact initial conditions. Newtonian mechanics and Laplacean determinism portray the world as predictable given enough data.

Quantum physics replaces certainty with probability. Max Born proposed in 1926 that the squared magnitude of the wavefunction gives outcome probabilities. In practice, you take the complex amplitude associated with an outcome and square its magnitude; for a normalized state, that number is the probability of the result.
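To make that recipe concrete, here is a minimal sketch in Python. The function name `born_probabilities` and the example amplitudes are illustrative assumptions, not taken from any particular library; the function also normalizes the state so that unnormalized amplitudes still give probabilities that sum to one.

```python
import math


def born_probabilities(amplitudes):
    """Return the probability of each outcome from its complex amplitude.

    Each probability is |a_i|^2 divided by the sum of all |a_j|^2, so the
    input does not have to be normalized beforehand.
    """
    weights = [abs(a) ** 2 for a in amplitudes]
    total = sum(weights)
    if total == 0:
        raise ValueError("state vector must be non-zero")
    return [w / total for w in weights]


# Hypothetical two-outcome state with amplitudes 1/sqrt(2) and i/sqrt(2).
amps = [1 / math.sqrt(2), 1j / math.sqrt(2)]
probs = born_probabilities(amps)
print(probs)
```

Note that the second amplitude is imaginary, yet both outcomes come out equally likely: only the magnitude of an amplitude enters the probability, while its phase matters when amplitudes are added together before squaring.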

The practical gap matters. Projectile motion is predictable to high precision with classical equations. Radioactive decay, by contrast, follows probabilistic half-lives, so ensembles are predictable but individual decays are not. Experimental design and sensor error budgets reflect that difference.
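The ensemble-versus-individual point can be illustrated with a small simulation. This is a sketch under simple assumptions: each atom independently survives to time t with probability (1/2)^(t / half-life), and the half-life, sample size, and random seed below are arbitrary illustrative values.

```python
import random


def survival_probability(t, half_life):
    """Probability that a single atom has not decayed by time t."""
    return 0.5 ** (t / half_life)


def simulate_survivors(n_atoms, t, half_life, rng):
    """Count atoms still undecayed at time t, one random draw per atom."""
    p = survival_probability(t, half_life)
    return sum(1 for _ in range(n_atoms) if rng.random() < p)


rng = random.Random(0)          # fixed seed so the run is repeatable
half_life = 10.0                # arbitrary time units (assumed value)
n_atoms = 100_000

survivors = simulate_survivors(n_atoms, half_life, half_life, rng)
fraction = survivors / n_atoms
print(fraction)
```

After one half-life the surviving fraction lands close to one half, and the scatter shrinks as the sample grows. Nothing in the model says which particular atoms survive; that is decided only by the random draw.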

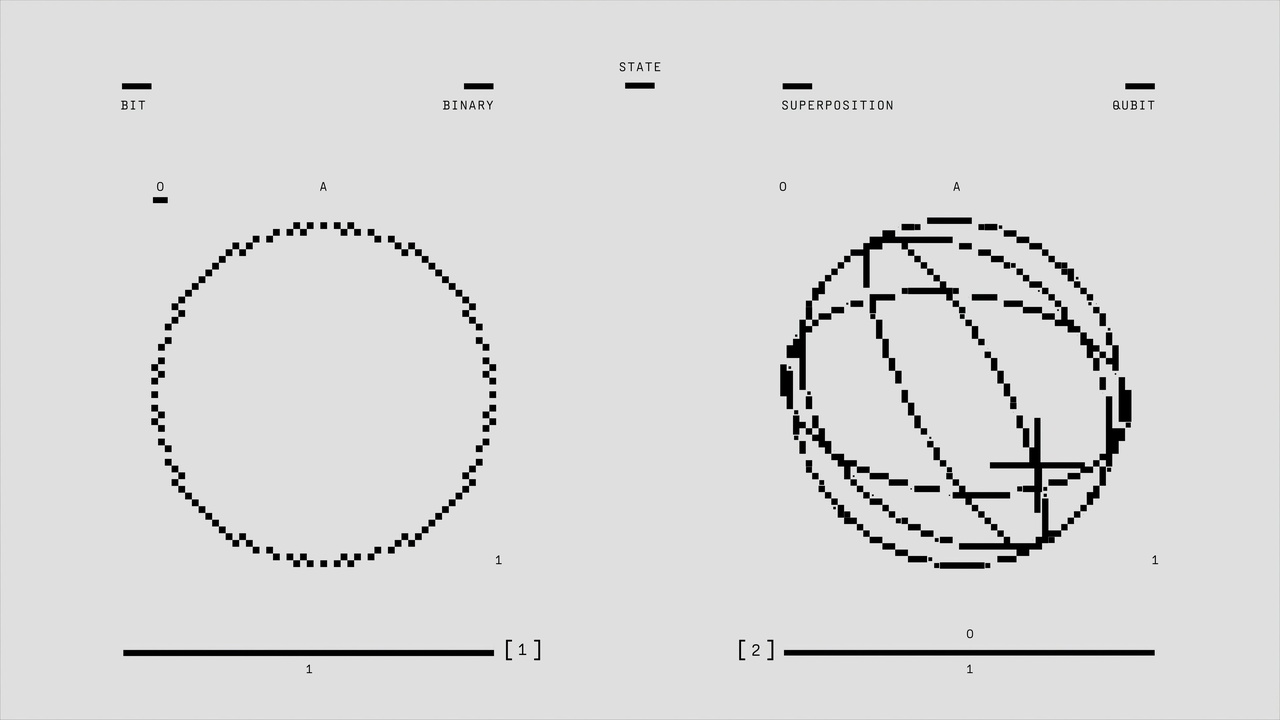

2. Continuous Variables vs. Quantization

Classical quantities such as energy, position, and momentum are continuous in principle. You can imagine smoothly varying a system from one state to another.

Quantum systems often show discrete spectra. Planck introduced quantized energy in 1900, and Bohr’s 1913 model labeled hydrogen energy levels with integers n = 1, 2, 3, and so on. Those integers matter: a transition between two levels emits a photon whose frequency is fixed by the energy difference between them.
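As a rough illustration of how integer labels turn into specific colors, the sketch below uses the textbook Bohr formula E_n = −13.6 eV / n² and the relation λ = hc / ΔE. The constants are standard rounded values, the function names are hypothetical, and the model ignores fine structure and the finite mass of the nucleus.

```python
RYDBERG_ENERGY_EV = 13.605693   # hydrogen binding energy in eV (infinite nuclear mass)
HC_EV_NM = 1239.841984          # Planck's constant times the speed of light, in eV*nm


def bohr_energy_ev(n):
    """Energy of hydrogen level n in the Bohr model, in electronvolts."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    return -RYDBERG_ENERGY_EV / n ** 2


def emission_wavelength_nm(n_upper, n_lower):
    """Wavelength of the photon emitted when the atom drops from n_upper to n_lower."""
    if n_upper <= n_lower:
        raise ValueError("emission requires n_upper > n_lower")
    delta_e = bohr_energy_ev(n_upper) - bohr_energy_ev(n_lower)
    return HC_EV_NM / delta_e


# The n = 3 -> 2 transition is the red Balmer line of hydrogen.
h_alpha_nm = emission_wavelength_nm(3, 2)
print(h_alpha_nm)
```

The 3 → 2 transition comes out near 656 nm, in the red part of the visible spectrum. Only wavelengths that correspond to a pair of integers can appear, which is why hydrogen shows sharp lines rather than a continuous rainbow.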

That discreteness explains why LEDs have particular colors and why atomic clocks use narrow transitions. For example, the cesium standard relies on a transition at 9,192,631,770 Hz. Device engineering exploits those fixed steps.

3. Wave–Particle Duality vs. Separate Entities

In classical thinking, waves and particles are distinct categories. A water wave behaves very differently from a billiard ball.

Quantum objects blur that distinction. Electrons produce interference in the Davisson–Germer experiment (reported in 1927) and in double‑slit setups, yet they arrive at detectors as localized impacts. Photons show interference and also knock electrons out of metals as particles.

Engineers take advantage of both aspects. Electron microscopes use wave behavior to resolve atomic structure, while photodetectors count photons as discrete events. The dual nature is a core quantum concept.
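To put a number on the wave side of that picture, here is a minimal sketch of the de Broglie relation λ = h / p for a non-relativistic electron. The function name is hypothetical, and the 54 eV energy is used only as an illustration: it is the value usually quoted for the Davisson–Germer measurement. At the much higher energies used in electron microscopes, a relativistic correction would be needed.

```python
import math

PLANCK_H = 6.62607015e-34            # Planck's constant, J*s
ELECTRON_MASS = 9.1093837e-31        # electron rest mass, kg
ELEMENTARY_CHARGE = 1.602176634e-19  # joules per electronvolt


def de_broglie_wavelength_m(kinetic_energy_ev):
    """Wavelength in meters of an electron with the given kinetic energy.

    Uses the non-relativistic momentum p = sqrt(2 m E).
    """
    if kinetic_energy_ev <= 0:
        raise ValueError("kinetic energy must be positive")
    energy_j = kinetic_energy_ev * ELEMENTARY_CHARGE
    momentum = math.sqrt(2 * ELECTRON_MASS * energy_j)
    return PLANCK_H / momentum


wavelength = de_broglie_wavelength_m(54.0)
print(wavelength)
```

The result is roughly 0.167 nm, comparable to the spacing between atoms in a crystal. That match in scale is why a crystal lattice can act as a diffraction grating for electrons.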

Measurement, Information, and Predictability

How we observe a system changes what we can know. Anchored by the Heisenberg uncertainty principle (1927) and Bell’s theorem (1964), this category covers measurement back‑action, limits to simultaneous knowledge, and the strange nonlocal correlations that carry information in new ways.

4. Measurement and the Observer Effect

Measurement plays a fundamentally different role in quantum physics than in classical physics. In classical mechanics, observation can be passive in principle.

Quantum measurement can change the system’s state. Von Neumann formalized the measurement framework: a measurement projects the wavefunction onto an eigenstate of the measured observable, a step often described as collapse. Experiments show measurement back‑action, so quantum sensors must account for that disturbance.
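The projection idea can be sketched for the simplest case, a single two-level system measured in a fixed basis. Everything here is an illustrative assumption: the function name, the starting amplitudes, and the choice to drop the overall phase of the post-measurement state, which has no observable effect.

```python
import math
import random


def measure(state, rng):
    """Projectively measure a two-level state (a, b) in the {|0>, |1>} basis.

    Returns (outcome, post_measurement_state). The outcome is random, with
    probability |a|^2 / (|a|^2 + |b|^2) for 0; afterwards the state is the
    matching basis state (global phase dropped).
    """
    a, b = state
    norm = abs(a) ** 2 + abs(b) ** 2
    if norm == 0:
        raise ValueError("state vector must be non-zero")
    p0 = abs(a) ** 2 / norm
    if rng.random() < p0:
        return 0, (1.0, 0.0)
    return 1, (0.0, 1.0)


rng = random.Random(1)
superposition = (1 / math.sqrt(2), 1 / math.sqrt(2))

first, collapsed = measure(superposition, rng)
repeats = [measure(collapsed, rng)[0] for _ in range(5)]
print(first, repeats)
```

The first result is unpredictable, but every repeated measurement of the collapsed state returns the same value. The original superposition cannot be recovered from the post-measurement state, which is the disturbance a sensor design has to budget for.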

Techniques like quantum nondemolition measurements extract information without disturbing the measured observable. The back‑action does not vanish, though; it is pushed onto the conjugate variable. That trade‑off is part of what makes quantum metrology both powerful and delicate.

5. Entanglement and Nonlocal Correlations

Quantum systems can share correlations with no classical analogue. Entangled particles show linked outcomes even when separated by large distances.

Bell’s theorem (1964) provided a testable distinction between quantum predictions and local realism. Alain Aspect’s experiments in 1982 and later loophole‑closing tests violated Bell inequalities, confirming quantum predictions. Those results forced a rethinking of locality and information.
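One common form of the test is the CHSH inequality: any local-realist model keeps the combination S = E(a,b) − E(a,b′) + E(a′,b) + E(a′,b′) within |S| ≤ 2, while quantum mechanics allows up to 2√2. The sketch below computes S directly from the two-particle singlet state. The measurement angles are a standard textbook choice, and the function names are hypothetical.

```python
import math


def spin_observable(theta):
    """Spin measurement along angle theta in the x-z plane: cos(theta)*Z + sin(theta)*X."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s], [s, -c]]


# Singlet state (|01> - |10>)/sqrt(2) in the basis |00>, |01>, |10>, |11>.
SINGLET = [0.0, 1 / math.sqrt(2), -1 / math.sqrt(2), 0.0]


def correlation(theta_a, theta_b):
    """Expectation value <psi| A(theta_a) (x) B(theta_b) |psi> for the singlet state."""
    a_op = spin_observable(theta_a)
    b_op = spin_observable(theta_b)
    total = 0.0
    for i in range(2):
        for j in range(2):
            for k in range(2):
                for m in range(2):
                    total += (SINGLET[2 * i + j] * a_op[i][k]
                              * b_op[j][m] * SINGLET[2 * k + m])
    return total


def chsh_value(a, a_prime, b, b_prime):
    """CHSH combination of the four correlations."""
    return (correlation(a, b) - correlation(a, b_prime)
            + correlation(a_prime, b) + correlation(a_prime, b_prime))


s_value = chsh_value(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
print(abs(s_value))
```

With these angles, |S| comes out at about 2.828, which is 2√2 and well above the local-realist bound of 2. Real experiments measure the same four correlations from detector counts rather than from a state vector.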

Quantum correlations are now practical. Quantum key distribution companies (e.g., ID Quantique) commercialize secure links. Research groups demonstrated photonic teleportation in 1997 and have pushed teleportation over increasing distances since. Protocols exploit entanglement to create communication and computing primitives unavailable to classical systems.

Mathematics, Scale, and Technological Consequences

The tools we use differ as much as the concepts. Classical physics leans on differential equations and fields; quantum theory uses linear operators in Hilbert space. Scale matters too: classical laws work well at macroscopic scales, while quantum rules dominate at the atomic and subatomic levels. Those mathematical and scale differences drive practical outcomes.

6. Different Mathematical Languages

Classical physics often expresses dynamics with deterministic differential equations such as Newton’s laws or Maxwell’s equations. Solutions live in function spaces and are propagated in time by ordinary or partial differential equations.

Quantum theory uses wavefunctions, state vectors, and operators acting in Hilbert space. Heisenberg’s matrix mechanics (1925) and Schrödinger’s wave equation (1926) are complementary formulations. Key objects include eigenvalues, eigenstates, and commutators like [x,p] = iħ, which encode noncommuting observables.
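Position and momentum need infinite-dimensional operators, so [x,p] = iħ cannot be reproduced exactly with finite matrices. As a stand-in, the sketch below checks a commutator for the 2×2 Pauli spin matrices, which obey [σx, σy] = 2iσz. It shows the same core idea: the order in which two observables act matters. The helper names are hypothetical.

```python
def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def commutator(a, b):
    """Return [a, b] = ab - ba for 2x2 matrices."""
    ab, ba = matmul(a, b), matmul(b, a)
    return [[ab[i][j] - ba[i][j] for j in range(2)] for i in range(2)]


SIGMA_X = [[0, 1], [1, 0]]
SIGMA_Y = [[0, -1j], [1j, 0]]
SIGMA_Z = [[1, 0], [0, -1]]

result = commutator(SIGMA_X, SIGMA_Y)
expected = [[2j * SIGMA_Z[i][j] for j in range(2)] for i in range(2)]
print(result)
```

A zero commutator would mean the two observables can share definite values at the same time. The non-zero result here is the spin analogue of the position and momentum trade-off.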

That shift matters for computation. Quantum simulation and control lean on linear algebra and operator exponentials rather than only on numerical integration of differential equations. Toolkits like QuTiP reflect that change in numerical approach.
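As a small illustration of the operator-exponential approach, the sketch below evolves a two-level system with the assumed Hamiltonian H = (ħω/2)σx. It builds the propagator U(t) = exp(−iHt/ħ) twice: once from the closed form cos(ωt/2)·I − i·sin(ωt/2)·σx, and once from a truncated power series. This is plain Python for illustration, not QuTiP's API, and all names are hypothetical.

```python
import math

SIGMA_X = [[0, 1], [1, 0]]


def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def propagator_closed_form(omega, t):
    """U(t) for H = (hbar*omega/2)*sigma_x, using the fact that sigma_x squared is the identity."""
    c, s = math.cos(omega * t / 2), math.sin(omega * t / 2)
    return [[c, -1j * s], [-1j * s, c]]


def propagator_series(omega, t, terms=30):
    """Same operator from the truncated series sum_k G^k / k!, with G = -i*omega*t*sigma_x/2."""
    generator = [[-1j * omega * t / 2 * SIGMA_X[i][j] for j in range(2)]
                 for i in range(2)]
    result = [[1, 0], [0, 1]]
    term = [[1, 0], [0, 1]]
    for k in range(1, terms):
        term = [[x / k for x in row] for row in matmul(term, generator)]
        result = [[result[i][j] + term[i][j] for j in range(2)] for i in range(2)]
    return result


def flip_probability(omega, t):
    """Probability that a system starting in |0> is found in |1> at time t."""
    return abs(propagator_closed_form(omega, t)[1][0]) ** 2


u_exact = propagator_closed_form(1.0, 2.0)
u_series = propagator_series(1.0, 2.0)
print(flip_probability(1.0, math.pi))
```

The two constructions agree to numerical precision, and no time-stepping is involved: the state at any time t follows from a single matrix applied to the initial state. For larger systems the exponential is usually computed by diagonalization or dedicated routines rather than a raw series.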

7. Practical Implications and Technologies

Many modern technologies depend on quantum effects that classical physics cannot explain. Semiconductors and transistors rely on band theory, lasers on stimulated emission, and MRI on nuclear magnetic resonance — all quantum in origin.

Historic milestones tie directly to quantum ideas: the transistor was invented at Bell Labs in 1947; the first working laser was built in 1960; MRI produced its first human images in the 1970s and moved into clinical use soon after. Quantum precision shows up in the cesium clock standard at 9,192,631,770 Hz. More recently, companies such as IBM, Google, D‑Wave, and IonQ are developing quantum processors.

The differences between quantum physics and classical physics explain why those devices work and why new quantum technologies are promising. You can’t design a reliable transistor, atomic clock, or quantum key distribution link without thinking in quantum terms.

Summary

Quantum and classical frameworks are complementary tools. One excels at everyday scales; the other captures the microscopic rules that make modern electronics, medical imaging, and emerging quantum systems possible.

- Classical physics gives deterministic, continuous descriptions; quantum theory uses probabilities and often discrete levels.

- Wave–particle duality and entanglement have no classical counterpart and lead to new information protocols like QKD and teleportation.

- Mathematics differs: differential equations and fields versus linear operators, eigenvalues, and commutators — a shift that changes simulation methods and intuition.

- Technologies from the transistor (Bell Labs, 1947) to cesium clocks (9,192,631,770 Hz) and modern quantum processors depend on quantum concepts, not just classical approximations.

Curious to learn more? Visit a university quantum research center, attend a public outreach event at a research institute such as the Perimeter Institute, or go to a local university lab open day to see experiments and demonstrations firsthand.