Algorithms shape how software, research and everyday tools solve problems — from finding the fastest route on a map to grouping users by behavior. Getting a compact, comparable view of common approaches makes it easier to match a technique to the task at hand.

There are 20 Examples of Algorithms, ranging from A* (pathfinding) to k-Means (clustering). For each algorithm you’ll find below a concise row organized by Category,Typical use case,Complexity (time/space) so you can quickly scan purpose and performance before diving deeper into the ones that fit your needs.

How do I pick the right algorithm for my problem?

Start by defining the problem type (search, sort, optimization, clustering), data size and constraints (real-time, memory limits). Use the Category and Typical use case columns to narrow candidates, then check Complexity (time/space) to judge scalability; prototype a small dataset to confirm accuracy and performance trade-offs.

When should I prioritize time complexity over space complexity?

Choose time complexity when latency or throughput is critical (real-time systems, user-facing features). Favor space efficiency for memory-constrained environments (embedded devices, large in-memory datasets). The Complexity (time/space) column helps balance those trade-offs with concrete examples.

Examples of Algorithms

| Name | Category | Typical use case | Complexity (time/space) |

|---|---|---|---|

| Quick Sort | sorting | Fast general-purpose in-memory array sorting | Worst-case O(n^2), space O(n) |

| Merge Sort | sorting | Stable sorting for large datasets and external sorting | Worst-case O(n log n), space O(n) |

| Heap Sort | sorting | In-place sorting when worst-case guarantees matter | Worst-case O(n log n), space O(1) |

| Insertion Sort | sorting | Small arrays or nearly-sorted data; inner loop in other algorithms | Worst-case O(n^2), space O(1) |

| Binary Search | searching | Fast lookup in sorted arrays or ranges | Worst-case O(log n), space O(1) |

| Dijkstra | graph | Shortest paths from single source on nonnegative weighted graphs | Worst-case O(E + V log V), space O(V) |

| A* | graph | Pathfinding with heuristics (games, navigation) | Worst-case exponential (O(b^d)), space O(b^d) |

| Kruskal | graph | Minimum spanning tree for sparse graphs | Worst-case O(E log E), space O(V) |

| Bellman-Ford | graph | Shortest paths with negative edges and cycle detection | Worst-case O(V·E), space O(V) |

| k-Means | ML | Unsupervised clustering of numeric data | Worst-case O(I·n·k·d), space O(n·d) |

| Knapsack (0/1 DP) | DP | Exact solution for bounded knapsack problems | Worst-case O(n·W), space O(W) |

| Longest Common Subsequence (LCS) | DP | Comparing sequences (diffs, bioinformatics) | Worst-case O(n·m), space O(n·m) |

| Kadane | DP | Maximum subarray sum in one-dimensional data | Worst-case O(n), space O(1) |

| KMP (Knuth–Morris–Pratt) | string | Fast exact substring search | Worst-case O(n+m), space O(m) |

| Rabin–Karp | string | Multiple-pattern search and rolling-hash matching | Worst-case O(n·m), space O(1) |

| Quickselect | randomized | Select k-th smallest element (order statistics) | Worst-case O(n^2), space O(1) |

| Euclidean algorithm | numeric | Compute greatest common divisor (GCD) | Worst-case O(log min(a,b)), space O(1) |

| RSA | crypto | Public-key encryption, digital signatures | Roughly O(k^3) time, space O(k^2) where k = key bits |

| SHA-256 | crypto | Cryptographic hashing for integrity and signing | Worst-case O(n), space O(1) |

| Huffman coding | compression | Lossless prefix coding for symbols with varied frequencies | Worst-case O(n log n), space O(n) |

Images and Descriptions

Quick Sort

Quick Sort partitions an array around a pivot and recursively sorts partitions. It’s widely used for its excellent average performance and low constant factors, performing in-place swaps but can degrade to quadratic time on bad pivot choices or already-sorted input.

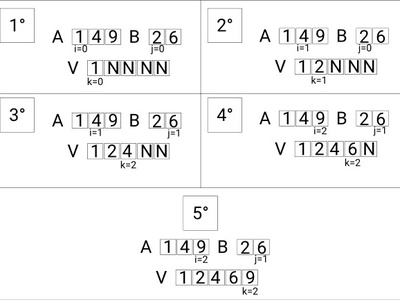

Merge Sort

Merge Sort is a divide-and-conquer algorithm that splits data, sorts halves, and merges them. It guarantees O(n log n) worst-case time and stability, making it reliable for large or external datasets though it needs extra memory.

Heap Sort

Heap Sort builds a binary heap from items and repeatedly extracts the max (or min) to sort. It provides O(n log n) worst-case time with constant extra space, useful where memory is constrained and predictable performance is required.

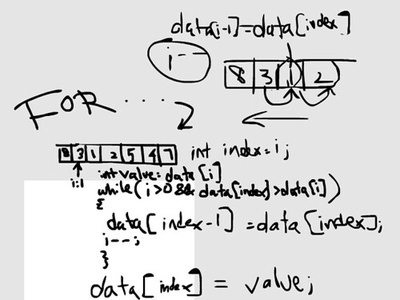

Insertion Sort

Insertion Sort builds a sorted prefix by inserting each new element into its place. It’s simple and fast on small or mostly-sorted arrays, with minimal memory overhead, often used for tiny arrays inside hybrid sorts.

Binary Search

Binary Search repeatedly halves a sorted range to find a target. It’s a fundamental, efficient method for lookups in sorted arrays or ranges, with logarithmic time and constant additional space.

Dijkstra

Dijkstra uses a priority queue to grow shortest-path estimates from a source node when all edge weights are nonnegative. It’s widely used in routing, maps, and network optimization for efficiently computing shortest paths.

A*

A* combines actual path cost and a heuristic estimate to guide search toward a goal. With a good admissible heuristic it is often much faster than uninformed search, commonly used in game AI and route planning.

Kruskal

Kruskal builds a minimum spanning tree by sorting edges and adding them if they don’t create a cycle, using a union-find structure. It’s simple and effective for sparse graphs and network design problems.

Bellman-Ford

Bellman-Ford relaxes edges repeatedly to compute shortest paths even with negative weights and can detect negative cycles. It’s slower than Dijkstra but valuable when negative edges are present.

k-Means

k-Means partitions data into k clusters by iteratively assigning points to nearest centroids and updating them. It’s simple and fast in practice for clustering numeric vectors, though sensitive to initialization and local optima.

Knapsack (0/1 DP)

0/1 Knapsack dynamic programming fills a table by capacity and items to maximize value under weight limits. It gives exact answers in pseudo-polynomial time dependent on numeric capacity, useful in resource allocation.

Longest Common Subsequence (LCS)

LCS computes the longest subsequence common to two sequences via a dynamic table. It’s used in file comparison, version control, and computational biology to measure similarity between strings or sequences.

Kadane

Kadane’s algorithm scans an array maintaining the best subarray ending at each position and the global maximum. It finds the maximum contiguous-sum subarray in linear time with constant extra space, popular in coding interviews and signal analysis.

KMP (Knuth–Morris–Pratt)

KMP preprocesses a pattern to build a failure table, then scans the text without backtracking on the text. It finds all pattern occurrences in linear time and is useful in text processing and search tools.

Rabin–Karp

Rabin–Karp uses a rolling hash to quickly compare substrings against a pattern; average-case is linear but worst-case degrades due to hash collisions. It’s convenient for multiple-pattern or plagiarism detection tasks.

Quickselect

Quickselect partitions like Quick Sort but recurses into the side containing the desired rank, often achieving linear average time. It’s commonly used to find medians or percentiles in unsorted arrays efficiently.

Euclidean algorithm

The Euclidean algorithm repeatedly reduces the GCD problem via remainders. It’s an ancient, extremely efficient method to compute integer GCDs and underpins many number-theoretic and cryptographic routines.

RSA

RSA uses modular exponentiation with large integers and number-theory for public-key encryption and signatures. Security relies on factoring difficulty; it’s fundamental in secure communications though slower than symmetric ciphers.

SHA-256

SHA-256 is a widely used cryptographic hash producing 256-bit digests from arbitrary-length messages. It’s designed to be fast, collision-resistant, and deterministic, used for checksums, digital signatures, and blockchain integrity.

Huffman coding

Huffman constructs an optimal variable-length prefix code from symbol frequencies using a priority queue. It’s widely used in lossless compression (e.g., ZIP, JPEG entropy coding) to reduce average encoded length based on symbol probabilities.